Why Secure Coding Matters

Developers are closest to software risk because they create the code paths attackers eventually interact with. Even with strong AppSec tooling, scanners and reviews happen after code is written. That means the cheapest and fastest way to reduce risk is to improve secure coding behavior at the source. When engineers consistently choose safe defaults, validate assumptions, and test abuse cases, vulnerability volume drops before it reaches QA, security gates, and production response workflows.

The risk landscape is also evolving. OWASP Top 10 (2021) categories such as A01 Broken Access Control and A03 Injection still drive major incidents, but today they appear inside API orchestration, background jobs, and cloud service connectors, not only classic web forms. A route handler that trusts a tenant ID from the client, or a background worker that processes untrusted serialized data, can create high-impact exposure even when perimeter controls look healthy.
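As a rough illustration of the background-worker case above, the sketch below treats a queue message as plain JSON and copies only an explicit set of validated fields instead of reviving arbitrary serialized objects. The function name `parseResizeJob`, the field names, and the limits are hypothetical; a real worker would apply its own schema.

```javascript
// Hypothetical job payload parser for a background worker.
// Assumption: jobs arrive as JSON text from a queue the worker does not fully trust.
const ALLOWED_FORMATS = new Set(["png", "jpeg", "webp"]);

function parseResizeJob(rawMessage) {
  let data;
  try {
    data = JSON.parse(rawMessage); // plain data only; no class or code revival
  } catch {
    return { ok: false, error: "malformed JSON" };
  }
  if (data === null || typeof data !== "object" || Array.isArray(data)) {
    return { ok: false, error: "payload must be an object" };
  }
  const { imageId, width, format } = data;
  if (typeof imageId !== "string" || !/^[a-z0-9-]{1,64}$/.test(imageId)) {
    return { ok: false, error: "invalid imageId" };
  }
  if (!Number.isInteger(width) || width < 1 || width > 4096) {
    return { ok: false, error: "invalid width" };
  }
  if (typeof format !== "string" || !ALLOWED_FORMATS.has(format)) {
    return { ok: false, error: "invalid format" };
  }
  // Copy only the validated fields so unexpected keys never reach later code.
  return { ok: true, job: { imageId, width, format } };
}
```

The design choice here is to reject by default: anything that is not explicitly expected and well-typed is dropped before the worker acts on it.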

Secure coding matters for developer productivity too. Repeated security defects create context switching, rollback pressure, and late-cycle refactoring that burns sprint capacity. Teams that build secure patterns into normal delivery spend less time in emergency patch mode and more time on product outcomes. In practical terms, secure coding is not a cost center for developers. It is a quality multiplier that lowers operational drag.

This is why high-performing engineering organizations treat secure coding as a core software skill, similar to testing, observability, and performance profiling. They train engineers with realistic defects, require test-backed remediations, and share proven secure patterns across teams. The result is not perfect code. The result is a repeatable engineering system that catches and fixes risky decisions earlier.

What Traditional Training Gets Wrong

Many developers have experienced mandatory secure coding courses that explain concepts but do not improve implementation quality. The reason is simple: passive formats teach recognition, not execution. You can agree that insecure deserialization is dangerous and still merge unsafe object handling logic under release pressure because you never practiced the defensive pattern in code.

Another issue is that examples are often too small or too clean. A single isolated code snippet in a slide ignores real complexity: framework middleware order, noisy logs, brittle tests, legacy helpers, and unclear ownership boundaries. Developers need lab environments where they navigate this complexity and still ship a secure fix. Without that, training knowledge fades when real tickets arrive.

Traditional courses also overemphasize final answers and underemphasize debugging process. In real engineering, secure coding decisions happen through investigation: tracing data flow, validating trust boundaries, reviewing policy checks, and iterating tests. If training does not model that process, it cannot build confidence for real incidents or difficult pull requests.

Finally, most legacy programs measure only completion. Developers see training as something to finish, not something to apply. A better model measures behavior change: defect recurrence, remediation quality, and exploit resistance over time. That kind of measurement makes secure coding relevant to both individual engineers and team leads.

How SecDim Solves This

SecDim gives developers practice in the form they already understand: solving engineering problems in code. Challenges are built around realistic vulnerabilities inspired by production incidents. Learners identify attack paths, reproduce failures, implement defensive changes, and validate outcomes using tests. This method trains secure coding as a hands-on technical discipline, not as policy memorization.

The platform is designed for incremental depth. Teams can start with interactive vulnerability wargames to build intuition quickly, then move into structured in-repository secure coding courses for deeper implementation patterns. Engineers can reinforce context with real-world security case studies and postmortems that connect coding mistakes to incident outcomes.

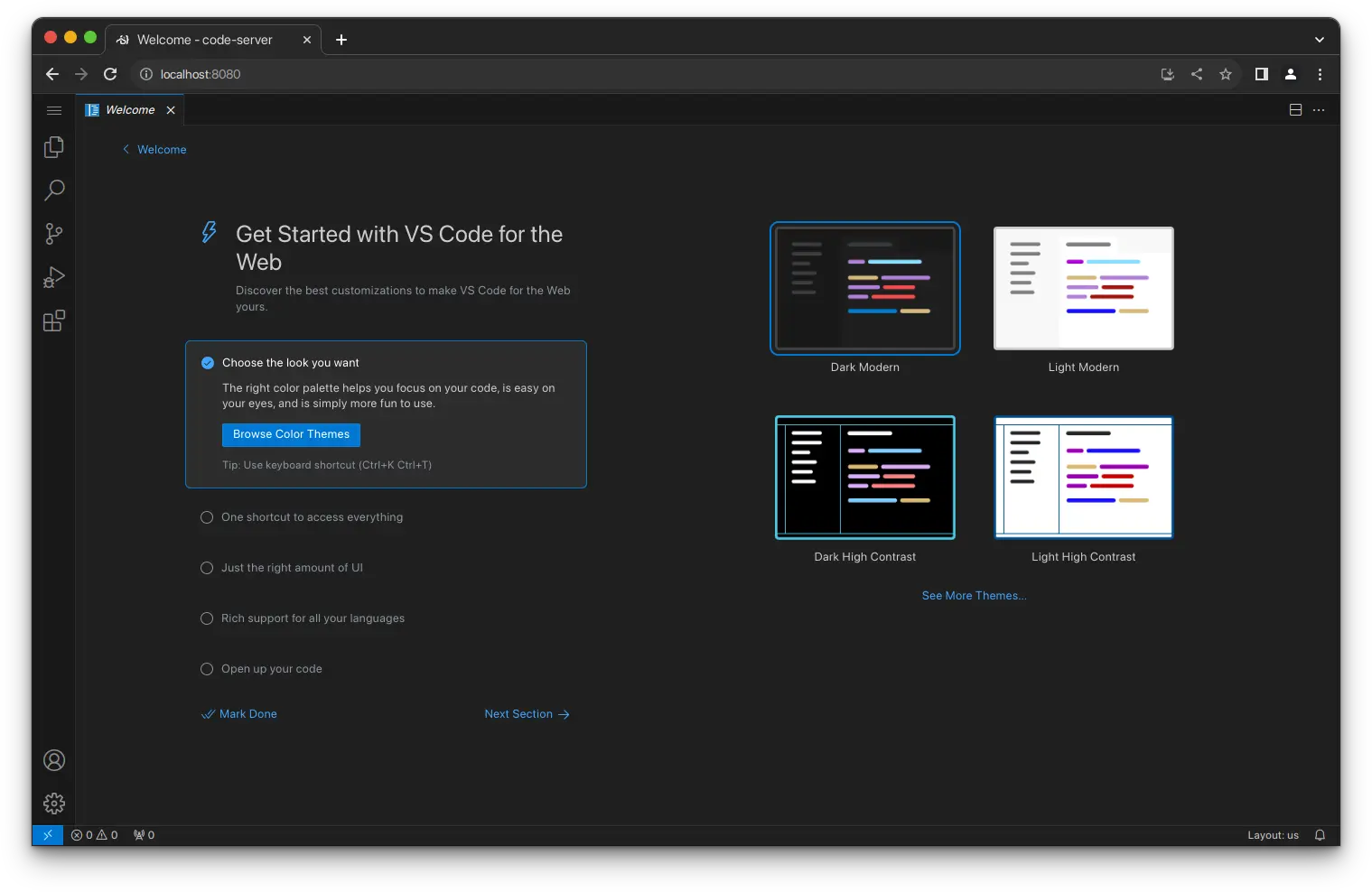

SecDim also supports developer workflows. You can use familiar tooling, write and run tests, and compare vulnerable and fixed states. Instead of guessing whether a patch is "secure enough," developers can verify that exploit conditions are blocked while functional requirements still pass. This improves confidence during code review and reduces last-minute security rework.

For team leads and AppSec partners, results are measurable. You can track how quickly engineers remediate specific vulnerability classes, where repeat issues occur, and which defensive patterns need reinforcement. This creates a shared language between developers, security engineers, and managers focused on implementation quality rather than completion reports.

This also improves collaboration during sprint execution. When developers, tech leads, and AppSec reviewers use the same challenge vocabulary, security feedback becomes concrete and actionable. Instead of comments like "harden input validation," reviewers can reference specific exploit paths and known secure patterns validated in labs. Over time, teams build reusable implementation templates for common risks such as tenant scoping, safe output encoding, strict deserialization, and defensive error handling. That consistency reduces review friction and helps developers ship secure fixes faster under real release pressure.
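One of the reusable templates named above, safe output encoding, might look like the following minimal sketch. The helper names `escapeHtml` and `renderComment` are hypothetical, and the sketch assumes output lands only in HTML element content or quoted attributes; other contexts need different encoders.

```javascript
// Minimal HTML escaping helper for text placed in element content or quoted attributes.
// Assumption: JavaScript, CSS, and URL contexts are out of scope and need their own encoders.
const HTML_ESCAPES = {
  "&": "&amp;",
  "<": "&lt;",
  ">": "&gt;",
  '"': "&quot;",
  "'": "&#39;",
};

function escapeHtml(value) {
  return String(value).replace(/[&<>"']/g, (ch) => HTML_ESCAPES[ch]);
}

// Hypothetical template that encodes every untrusted value at the point of output.
function renderComment(author, body) {
  return `<p><strong>${escapeHtml(author)}</strong>: ${escapeHtml(body)}</p>`;
}
```

Keeping the encoder in one shared helper lets reviewers point to a known pattern instead of re-deriving the escaping rules in each pull request. Many template engines already escape by default, in which case a team template would simply document that setting.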

Practice Secure Coding Where You Build Software

Replace passive lessons with realistic challenge workflows that improve how developers write, review, and ship code.

Real-World Example: Broken Access Control in a Project API

A common developer mistake is trusting user input for object ownership decisions. Imagine an API endpoint that returns project details by ID. It authenticates the user but does not enforce tenant or ownership checks. In many teams, this slips through review because the endpoint "requires auth," yet it still allows an Insecure Direct Object Reference (IDOR), which maps to OWASP A01 Broken Access Control.

    // Vulnerable: authenticated but missing ownership authorization
    router.get("/api/projects/:projectId", async (req, res) => {
      const project = await prisma.project.findUnique({
        where: { id: req.params.projectId }
      });

      if (!project) {
        return res.status(404).json({ error: "Not found" });
      }

      return res.json(project);
    });

In a lab, developers first reproduce the issue by querying IDs that belong to another account. They then patch the endpoint by binding access to the authenticated user and organization context. The secure fix is not just adding a conditional in one handler; it involves setting a repeatable authorization pattern that other endpoints can reuse.

    // Fixed: authorization scoped to authenticated tenant and user
    // Assumes authentication middleware has already populated req.user.
    router.get("/api/projects/:projectId", async (req, res) => {
      const project = await prisma.project.findFirst({
        where: {
          id: req.params.projectId,
          tenantId: req.user.tenantId,
          members: {
            some: { userId: req.user.id }
          }
        }
      });

      // Missing and unauthorized projects both return 404,
      // so the response does not confirm that an ID exists.
      if (!project) {
        return res.status(404).json({ error: "Not found" });
      }

      return res.json(project);
    });
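The repeatable authorization pattern mentioned above could be captured in a small helper that builds the ownership filter once. This is only a sketch: `projectScope` is a hypothetical name, and it assumes the same `tenantId` and `members` fields used in the fixed handler.

```javascript
// Hypothetical helper that builds the ownership filter once so every
// project query applies the same tenant and membership constraints.
// Assumption: `user` comes from authentication middleware, never from request input.
function projectScope(user, extraWhere = {}) {
  if (!user || !user.id || !user.tenantId) {
    throw new Error("projectScope requires an authenticated user");
  }
  return {
    ...extraWhere,
    // Scope keys come last so caller-supplied filters cannot override them.
    tenantId: user.tenantId,
    members: { some: { userId: user.id } },
  };
}
```

A handler would then pass `projectScope(req.user, { id: req.params.projectId })` as the `where` argument, which yields the same filter as the fixed query while keeping the rule in one reviewable place.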

The final step is regression coverage. Developers add tests that verify users cannot access projects outside their tenant and that valid users still retrieve their own data. This creates a durable defense layer. Future refactors are less likely to reintroduce the same access control mistake because the test suite captures the security contract.
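The regression coverage described above can be sketched without a database or test framework. Everything here is illustrative: the in-memory `projects` array stands in for the data store, and `getProjectForUser` is a hypothetical function that mirrors the fixed query's filter.

```javascript
// In-memory stand-in for the database so the sketch runs without Prisma or Express.
// All records, IDs, and function names are hypothetical.
const projects = [
  { id: "p1", tenantId: "t1", members: ["alice"] },
  { id: "p2", tenantId: "t2", members: ["bob"] },
];

// Mirrors the fixed query: the ID, tenant, and membership must all match.
function getProjectForUser(user, projectId) {
  return (
    projects.find(
      (p) =>
        p.id === projectId &&
        p.tenantId === user.tenantId &&
        p.members.includes(user.id)
    ) ?? null
  );
}

function check(condition, message) {
  if (!condition) throw new Error(`Security contract violated: ${message}`);
}

function runAccessControlChecks() {
  const alice = { id: "alice", tenantId: "t1" };
  // Functional requirement: members still read their own project.
  check(getProjectForUser(alice, "p1")?.id === "p1", "member can read own project");
  // Abuse case: a valid ID from another tenant must look like "not found".
  check(getProjectForUser(alice, "p2") === null, "cross-tenant read is blocked");
  // Abuse case: same tenant, but not a project member.
  const carol = { id: "carol", tenantId: "t1" };
  check(getProjectForUser(carol, "p1") === null, "non-member read is blocked");
  return "ok";
}
```

In a real suite the same three assertions would run against the HTTP endpoint with the team's usual test runner, so both the abuse cases and the functional requirement stay pinned through refactors.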

The same exercise can branch into adjacent vulnerabilities developers face daily: insecure JWT claim handling, unsafe markdown rendering leading to stored XSS, and SSRF from image-fetching microservices. By practicing full exploit-to-fix loops, engineers build practical pattern memory they can apply in active product work.
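For the SSRF case mentioned above, one common defensive step is to check a requested URL against an allow-list before fetching it. The sketch below assumes a hypothetical image-fetching service; the hostnames and the `isAllowedImageUrl` name are placeholders, and the check is only one layer of a fuller defense.

```javascript
// Hypothetical allow-list for an image-fetching service; hostnames are placeholders.
const ALLOWED_IMAGE_HOSTS = new Set(["images.example.com", "cdn.example.com"]);

function isAllowedImageUrl(rawUrl) {
  let url;
  try {
    url = new URL(rawUrl); // WHATWG URL parser, global in Node.js and browsers
  } catch {
    return false; // not an absolute, parseable URL
  }
  if (url.protocol !== "https:") return false;
  // Reject embedded credentials, which are often used to disguise the real host.
  if (url.username !== "" || url.password !== "") return false;
  // Only the default HTTPS port is accepted.
  if (url.port !== "") return false;
  return ALLOWED_IMAGE_HOSTS.has(url.hostname);
}
```

A URL check alone does not cover redirects or DNS changes between validation and fetch, so a lab fix would typically also disable automatic redirects or re-validate each hop.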

SecDim vs Traditional Secure Coding Training

| Evaluation Area | Traditional Training | SecDim for Developers |
| --- | --- | --- |
| Passive slides vs hands-on labs | Primarily video or slides with limited code execution. | Challenge-based labs where developers exploit, debug, patch, and retest code. |
| Completion metrics vs behavior metrics | Learning management system completion and quiz status. | Implementation behavior data, remediation quality, and recurrence tracking by topic. |
| Knowledge vs injection-rate reduction | Knowledge checks disconnected from active repositories. | Practical work that drives measurable reductions in injection and similar defect patterns. |
| Static compliance vs measurable control effectiveness | Static attestations proving attendance. | Test-backed evidence that secure coding controls work in realistic scenarios. |
| Developer workflow alignment | Detached from daily coding and code review processes. | Built around repository, testing, and engineering tool workflows developers already use. |
| Content relevance | Generic examples with weak mapping to modern stacks. | Real-world vulnerability scenarios mapped to current software patterns and OWASP categories. |

How It Works

Developers usually get the best results when secure coding practice is embedded in normal engineering cadence. A practical path looks like this:

- Pick one high-risk topic. Start with a defect class your team sees often, such as access control failures, injection, or unsafe file processing.
- Run focused challenges. Use hands-on wargame scenarios to build exploit and remediation intuition quickly.
- Deepen with repository labs. Move into secure coding repository exercises that mirror realistic engineering structure, dependencies, and test suites.
- Capture secure patterns. Convert successful fixes into team checklists, helper libraries, and review guidelines so improvements persist.
- Reinforce with case studies. Use incident-driven blog content to connect your coding choices with real-world exploit outcomes.
- Review outcomes quarterly. Track repeated defect trends and adjust challenge focus so training stays aligned with current risk.

This structure keeps secure coding practical, measurable, and relevant for both individual engineers and team-wide quality goals.
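The quarterly review step above depends on simple trend numbers. As a loose sketch, assuming defect records exported from an issue tracker with hypothetical `category`, `isRepeat`, and `daysToFix` fields, recurrence and remediation time per category could be computed like this:

```javascript
// Hypothetical defect records; field names are assumptions, not a real tracker schema.
function summarizeDefects(defects) {
  const byCategory = new Map();
  for (const d of defects) {
    const entry = byCategory.get(d.category) ?? { count: 0, repeats: 0, totalDays: 0 };
    entry.count += 1;
    if (d.isRepeat) entry.repeats += 1;
    entry.totalDays += d.daysToFix;
    byCategory.set(d.category, entry);
  }
  // Highest recurrence first, so the next challenge focus is easy to pick.
  return [...byCategory.entries()]
    .map(([category, e]) => ({
      category,
      count: e.count,
      recurrenceRate: e.repeats / e.count,
      meanDaysToFix: e.totalDays / e.count,
    }))
    .sort((a, b) => b.recurrenceRate - a.recurrenceRate);
}
```

Categories at the top of the result are candidates for the next round of focused challenges.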

Teams can further improve results by linking lab outcomes to lightweight engineering rituals. Examples include adding a short security check in design reviews for new API endpoints, keeping secure coding snippets in internal docs, and rotating developers through security champion responsibilities. These practices turn one-off training sessions into a repeatable operational discipline. As teams adopt the same vocabulary and defensive defaults, secure coding quality becomes easier to sustain even when projects, frameworks, and personnel change.

FAQ

What does secure coding for developers include?

It includes hands-on practice for identifying vulnerabilities, implementing secure fixes, and validating defenses with repeatable tests in realistic codebases.

Which vulnerabilities should developers prioritize first?

Most teams begin with high-impact, recurring categories such as broken access control, injection, insecure authentication logic, and unsafe API input handling.

How do secure coding examples improve code review quality?

Developers who practice exploit and remediation paths recognize risky patterns faster in pull requests and propose more reliable defensive alternatives.

Can developers train without changing their toolchain?

Yes. Effective secure coding labs use familiar workflow concepts such as repositories, IDE debugging, and test execution, so adoption friction stays low.

How often should teams run secure coding practice?

A consistent monthly or sprint-aligned cadence is better than annual events, because it reinforces behavior before insecure patterns become normalized.

Where can I find more practical secure coding material?

Use the secure coding wargame, in-repository learning tracks, and the SecDim security blog to keep skills current.

Build Practical Secure Coding Skills Across Your Engineering Team

Use realistic labs and measurable outcomes to improve secure implementation quality without interrupting delivery momentum.