Why Secure Coding Matters

Enterprises now run hundreds of services, APIs, data pipelines, and event-driven workloads that change daily. That pace is good for feature velocity, but it also multiplies the number of places where one insecure coding decision can expose data, break authorization boundaries, or create code execution risk. In most incidents, attackers do not need zero-days. They reuse predictable weaknesses that are already mapped in common frameworks such as the OWASP Top 10 (2021 edition), including A01 Broken Access Control, A03 Injection, A05 Security Misconfiguration, and A10 Server-Side Request Forgery.

Leadership teams often invest in scanners, WAF policies, cloud controls, and incident response, but they still discover the same coding flaws in sprint after sprint. Traditional tooling finds defects late, usually after merge or deployment, when remediation costs are higher and release pressure is strongest. Secure code training closes that gap by changing behavior at the source: the design and implementation choices developers make before risky code is committed. That is why mature programs treat training as a control that must produce measurable outcomes, not as awareness content that only proves participation.

The business impact is direct. Fewer severe defects reaching production means lower incident likelihood, reduced time spent on emergency patching, and stronger customer trust during vendor security assessments. For CISOs and engineering directors, this translates into clearer board-level reporting: not "500 engineers completed a module," but "injection-style coding errors dropped by 38% in target repositories over two quarters." That shift from activity metrics to risk metrics is the reason enterprise secure code training deserves a dedicated strategy and an execution model designed for software teams.

What Traditional Training Gets Wrong

Most secure coding programs fail because they are optimized for content delivery, not for engineering behavior change. They use annual videos, static e-learning decks, and short multiple-choice quizzes that test memory, not implementation. Developers can pass these courses without opening an IDE, writing a unit test, or debugging a single vulnerable branch. That mismatch creates confidence in reporting dashboards while leaving real code quality unchanged.

The second failure is context. A generic deck that explains SQL injection or broken authentication in abstract terms does not reflect how your teams actually build services. Engineers need realistic constraints: deadlines, legacy code, framework quirks, and integration pressure. Without context, lessons do not transfer to day-to-day pull requests. The result is familiar in AppSec programs: same bug class, same team, same remediation loop.

The third failure is measurement. Legacy programs report completion percentage, average quiz score, and policy attestation. Those are useful for audit evidence, but they do not answer operational security questions. Did developers stop concatenating SQL in query builders? Did authorization checks move from controller middleware into enforced service-level rules? Did API teams eliminate insecure deserialization patterns in critical paths? If your training stack cannot answer these questions, it is a compliance artifact, not a security control.
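To make the contrast with completion percentages concrete, here is a minimal sketch of one possible risk-style metric: the percentage reduction in findings of a single bug class per team between two periods. The record shape, the `reductionByTeam` name, the team names, and all counts are invented for illustration; they are not taken from any real program or from the SecDim platform.

```javascript
// Illustrative findings only: every team name and count here is made up.
const findings = [
  { team: "payments", bugClass: "injection", period: "Q1", count: 20 },
  { team: "payments", bugClass: "injection", period: "Q3", count: 15 },
  { team: "identity", bugClass: "injection", period: "Q1", count: 8 },
  { team: "identity", bugClass: "injection", period: "Q3", count: 8 },
  { team: "payments", bugClass: "xss", period: "Q1", count: 5 }, // ignored below
];

// Hypothetical helper: percentage reduction per team for one bug class.
// Returns null for a team with no findings in the starting period,
// because a percentage change from zero is undefined.
function reductionByTeam(records, bugClass, fromPeriod, toPeriod) {
  const totals = new Map();
  for (const record of records) {
    if (record.bugClass !== bugClass) continue;
    const entry = totals.get(record.team) || { before: 0, after: 0 };
    if (record.period === fromPeriod) entry.before += record.count;
    if (record.period === toPeriod) entry.after += record.count;
    totals.set(record.team, entry);
  }
  const result = {};
  for (const [team, { before, after }] of totals) {
    result[team] =
      before === 0 ? null : Math.round(((before - after) / before) * 100);
  }
  return result;
}

const injectionTrend = reductionByTeam(findings, "injection", "Q1", "Q3");
console.log(injectionTrend); // { payments: 25, identity: 0 }
```

A figure like this only means something if the findings come from a consistent source, such as the same scanner rules or review checklist in both periods.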

Finally, traditional models often separate security learning from engineering leadership. Security teams purchase courses, HR assigns completion deadlines, and managers only see red or green completion statuses. No one owns the link between learning and code quality outcomes. Effective secure code training requires shared ownership: security teams define risk priorities, engineering leaders enforce execution, and data from practical labs feeds both groups.

How SecDim Solves This

SecDim is designed as a developer-first, measurable secure code training platform. Instead of watching passive content, engineers solve realistic attack-and-defence labs that reflect how vulnerabilities are introduced and fixed in production systems. Learners use familiar workflows, including cloning repositories, running tests, patching vulnerable code, and validating remediations. This format creates practice under the same pressure patterns found in delivery environments.

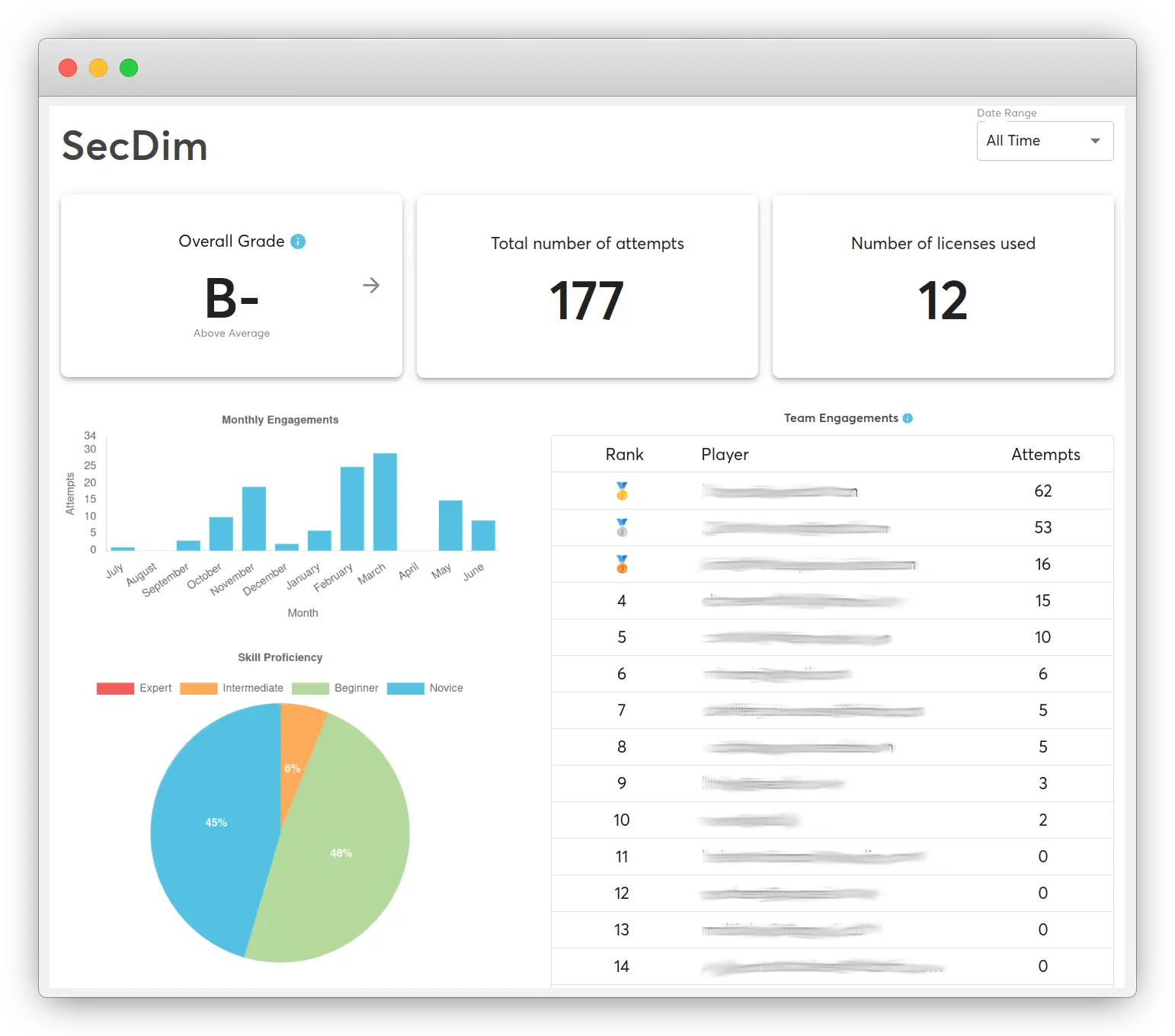

For security leaders, the core advantage is measurable behavior data. The platform does not stop at completion status. It tracks how developers approach vulnerabilities, which defensive patterns they apply, and how reliably they prevent regression in repeat scenarios. That data can be aggregated by team, role, and topic to support risk reviews, security program planning, and board reporting.

For engineering managers, rollout is practical. Teams can start with baseline challenges aligned to high-risk categories, then progress into role-specific pathways for API engineers, backend teams, full-stack teams, and platform teams. Managers can pair this with existing SDLC controls such as SAST, code review checklists, and release gates. The outcome is a single learning loop: identify vulnerability patterns, practice secure fixes, and verify improvement in both labs and production repositories.

If you run instructor-led programs, SecDim also supports security champions and trainers who need structured lab execution. You can see details on SecDim for trainer-led secure coding programs, and evaluate enterprise deployment options on the secure code learning platform page. When you are ready to evaluate fit for your environment, use the secure code training demo workflow to define pilot scope, metrics, and success criteria.

Move Beyond Completion Metrics

See how your organization can run secure code training as a measurable control with clear behavior outcomes for engineering and security leadership.

Real-World Example: Injection in an Internal Admin API

Consider an internal admin endpoint used by operations teams to search users by email. Because the endpoint is "internal," the team skips strict validation and builds SQL using string concatenation. During a red-team exercise, an attacker chains compromised credentials with this endpoint and extracts privileged user data. This is a common pattern mapped to OWASP A03 Injection, especially when trust assumptions around "internal" traffic replace secure coding controls.

    // Vulnerable: SQL query built from unsanitized input
    app.get("/admin/users", async (req, res) => {
      const email = req.query.email;
      const sql = `SELECT id, email, role FROM users WHERE email = '${email}'`;
      const rows = await db.query(sql);
      res.json(rows);
    });

In training, developers exploit this endpoint first so the risk is tangible. They see how payloads like `' OR '1'='1` affect query logic, then correlate the impact with data exposure and privilege escalation paths. That attack step matters: teams that only read about injection often underestimate exploitability in their own services.
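To see why that payload changes the query logic, it helps to print the SQL string the vulnerable handler would build. The sketch below reuses the same template string; `buildVulnerableSql` is a hypothetical helper introduced only for illustration and does not touch a database.

```javascript
// Hypothetical helper that mirrors the string building in the vulnerable handler.
function buildVulnerableSql(email) {
  return `SELECT id, email, role FROM users WHERE email = '${email}'`;
}

const benign = buildVulnerableSql("alice@example.com");
const malicious = buildVulnerableSql("' OR '1'='1");

console.log(benign);
// SELECT id, email, role FROM users WHERE email = 'alice@example.com'

console.log(malicious);
// SELECT id, email, role FROM users WHERE email = '' OR '1'='1'
```

In the second query the payload's quote closes the string literal early, and the remaining `OR '1'='1'` condition is true for every row, so the filter on email no longer restricts the result.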

    // Fixed: parameterized query, input format validation, and an explicit column list
    app.get("/admin/users", async (req, res) => {
      // Normalize the input to a trimmed string before validating it
      const email = String(req.query.email || "").trim();
      if (!/^[^\s@]+@[^\s@]+\.[^\s@]+$/.test(email)) {
        return res.status(400).json({ error: "Invalid email format" });
      }
      // The value is bound to $1 and never becomes part of the SQL text
      const sql = "SELECT id, email, role FROM users WHERE email = $1";
      const rows = await db.query(sql, [email]);
      return res.json(rows);
    });

SecDim labs then require a defensive completion path: implement a parameterized query, validate input format, run regression tests, and pass attack-oriented test cases. Completion only counts when exploit traffic no longer succeeds and business logic still works. This "attack to test to patch" sequence directly trains practical behavior that translates to production pull requests.
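As a rough idea of what attack-oriented test cases could look like, the sketch below factors the fixed handler's logic into a pure function and checks it against a few payloads. The `buildUserQuery` and `resistsPayload` names and the payload list are assumptions made for this example; they are not the actual SecDim lab tests.

```javascript
// Same email pattern as the fixed handler above.
const EMAIL_PATTERN = /^[^\s@]+@[^\s@]+\.[^\s@]+$/;

// Hypothetical pure helper: validates the input and returns either a
// rejection or a parameterized query description (text plus bound values).
function buildUserQuery(rawEmail) {
  const email = String(rawEmail || "").trim();
  if (!EMAIL_PATTERN.test(email)) {
    return { ok: false, status: 400, error: "Invalid email format" };
  }
  return {
    ok: true,
    text: "SELECT id, email, role FROM users WHERE email = $1",
    values: [email],
  };
}

// Illustrative payloads. The last one has no whitespace, so it passes the
// format check; it must still stay out of the SQL text.
const attackPayloads = [
  "' OR '1'='1",
  "a@b.c'; DROP TABLE users; --",
  "a@b.c'OR'1'='1",
];

// A payload is resisted if it is rejected with a 400, or if it only ever
// appears as a bound value and never inside the SQL text.
function resistsPayload(payload) {
  const result = buildUserQuery(payload);
  if (!result.ok) return result.status === 400;
  return (
    !result.text.includes(payload) &&
    result.values.length === 1 &&
    result.values[0] === payload.trim()
  );
}

const allResisted = attackPayloads.every(resistsPayload);
const legit = buildUserQuery("  alice@example.com ");

console.log(allResisted); // true
console.log(legit.ok, legit.values); // true [ 'alice@example.com' ]
```

The third payload shows why the format check is not the primary defence: it passes validation, and only the bound parameter keeps it out of the query text.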

The same scenario can be extended into other OWASP categories. Teams add authorization checks to ensure only privileged roles can call the endpoint (A01 Broken Access Control), enforce audit logging for sensitive access (A09 Security Logging and Monitoring Failures), and validate outbound data handling to reduce accidental leakage. By connecting one defect to adjacent control failures, secure code training becomes a system-level exercise rather than a single bug fix.
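The authorization and audit-logging extensions could be sketched as a single Express-style middleware, shown below without importing Express so it runs standalone. The role names, the `requireRole` helper, the in-memory `auditLog`, and the assumption that upstream authentication sets `req.user` are all hypothetical.

```javascript
// Hypothetical allowlist of roles permitted to call the admin endpoint.
const ADMIN_ROLES = new Set(["admin", "support-lead"]);

// In-memory audit sink for this sketch only; a real service would send
// these records to its logging pipeline.
const auditLog = [];

// Returns middleware that records every access attempt and blocks callers
// whose role is not in the allowlist.
function requireRole(allowedRoles) {
  return function roleGuard(req, res, next) {
    const user = req.user; // assumed to be set by upstream authentication
    const allowed = Boolean(user) && allowedRoles.has(user.role);
    auditLog.push({
      at: new Date().toISOString(),
      actor: user ? user.id : null,
      path: req.path,
      outcome: allowed ? "allowed" : "denied",
    });
    if (!allowed) {
      return res.status(403).json({ error: "Forbidden" });
    }
    return next();
  };
}

// Minimal stand-in for an Express response, used to exercise the guard.
function fakeResponse() {
  return {
    statusCode: 200,
    body: null,
    status(code) {
      this.statusCode = code;
      return this;
    },
    json(payload) {
      this.body = payload;
      return this;
    },
  };
}

// Possible wiring in an Express app (not executed here):
// app.get("/admin/users", requireRole(ADMIN_ROLES), handler);
```

Logging denied attempts as well as allowed ones is a deliberate choice in this sketch, since repeated denials on an admin route are often the more interesting signal.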

SecDim vs Traditional Secure Coding Training

| Evaluation Area | Traditional Training | SecDim Secure Code Training |
| --- | --- | --- |
| Passive slides vs hands-on labs | Annual slide decks and video modules with limited practice. | Live attack-and-defence labs where engineers debug, exploit, and patch real code. |
| Completion metrics vs behavior metrics | Completion percentage and quiz score reporting. | Behavioral evidence including exploit resistance, fix quality, and repeat-defect reduction by team. |
| Knowledge vs injection-rate reduction | Focus on awareness and terminology retention. | Focus on measurable reduction of injection and related coding defects in engineering workflows. |
| Static compliance vs measurable control effectiveness | Audit-ready attestations with weak linkage to operational security outcomes. | Evidence of control effectiveness tied to real vulnerability classes and remediation behavior over time. |
| Role alignment | One-size-fits-all training for all technical roles. | Role-specific pathways for platform, API, backend, frontend, and DevSecOps teams. |
| Leadership visibility | Reports oriented around LMS administration. | Dashboards built for security and engineering leadership decision-making. |

How It Works

A practical enterprise rollout does not require replacing your existing AppSec program. SecDim is most effective when integrated with your current delivery and governance model. A typical launch sequence includes the following steps:

- Define risk priorities. Security and engineering leadership identify the vulnerability classes that create the highest business risk in your environment, often using incident data, bug backlog trends, and threat modeling outcomes.

- Run baseline assessments. Teams complete initial labs to establish current behavior across high-priority topics such as injection, broken access control, authentication, and API input handling.

- Assign targeted pathways. Learners are mapped to role-specific challenge sets so each team spends time on vulnerabilities they are most likely to introduce in production.

- Measure fix quality. Training outcomes capture not only whether a challenge is solved, but also whether the remediation pattern is robust and resistant to variant attacks.

- Review with leadership. Engineering managers and security leaders review behavior trends, identify teams needing support, and prioritize follow-up labs based on observed weaknesses.

- Expand to continuous learning. Successful pilots evolve into quarterly or release-aligned cycles, turning secure code training into a consistent capability rather than an annual event.

This model supports both enterprise security programs and trainer-led enablement. Organizations that rely on internal champions can pair the same labs with facilitator workflows from SecDim for security trainers, while central teams can evaluate broader deployment features from the platform overview for companies.

FAQ

What is secure code training?

Secure code training teaches developers to identify, exploit, and remediate vulnerabilities in working code so they build durable defensive habits instead of memorizing theory.

How is enterprise secure code training different from awareness programs?

Enterprise secure code training is tied to measurable engineering outcomes. It focuses on defect reduction in real workflows, while awareness programs usually focus on policy understanding and completion.

How do secure coding labs support compliance requirements?

Labs provide practical evidence that teams can apply required controls in code. This complements compliance attestations with operational proof of secure behavior.

What metrics should CISOs track for secure code training?

Useful metrics include repeated bug class reduction, exploit resistance, remediation quality, and trend data by team or product area. Completion rates alone are insufficient.

Can secure code training fit busy engineering teams?

Yes. Programs are most effective when delivered in focused sessions aligned to sprint cadence, with role-specific labs that map directly to active engineering responsibilities.

How do we start a pilot with SecDim?

Start by defining target teams, high-priority vulnerability classes, and success criteria. Then book a demo for secure code training to design a pilot with measurable milestones.

Ready to Run Secure Code Training That Produces Measurable Outcomes?

Replace passive training with practical labs and behavior-based reporting that both engineering leadership and security teams can trust.